Prominent Topics

-

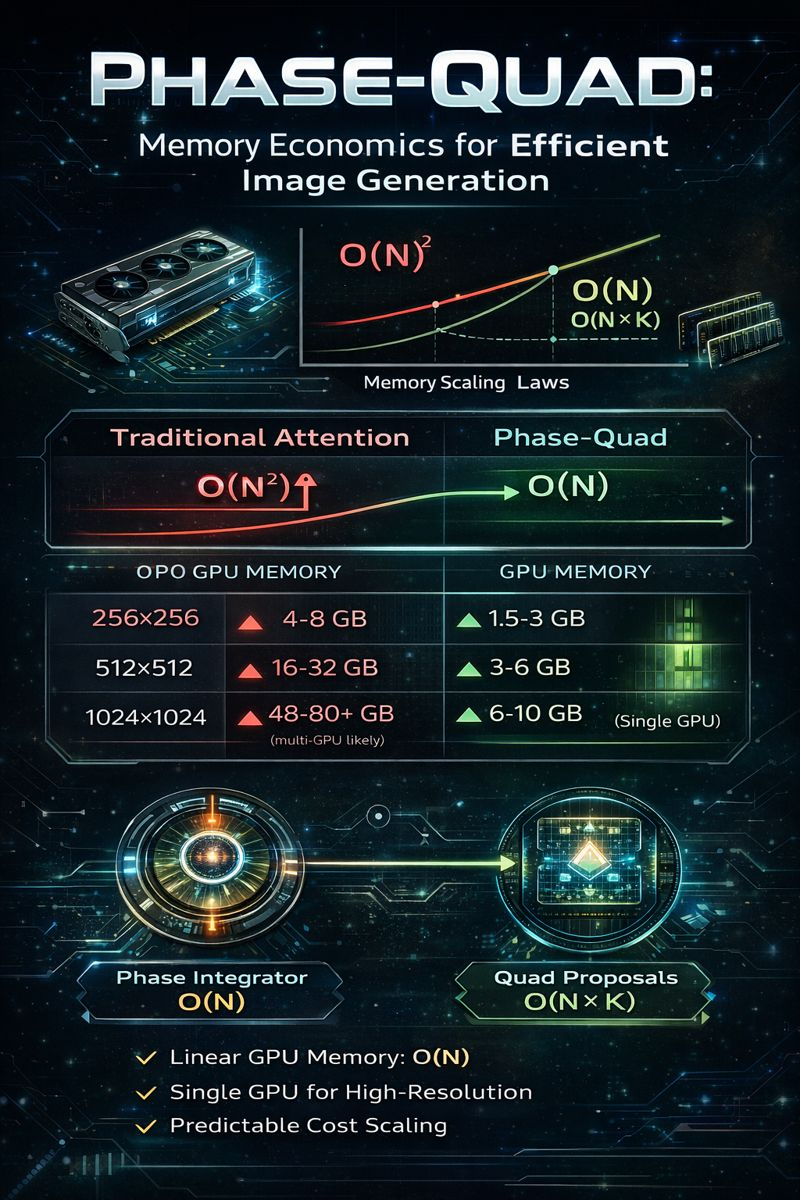

Phase–Quad Memory Economics

Why Linear Memory Changes the Cost of Image and Language Generation

Modern generative AI systems (large language models and image/video generators) are increasingly constrained not by raw compute, but by memory bandwidth and memory growth. As models scale to longer contexts and higher resolutions, the dominant cost driver becomes how much information must be stored, moved, and

-

An Architectural Shift from Tokens to Cognition

Modern language models are extraordinary pattern learners. Trained on massive corpora, they predict tokens with remarkable fluency—often giving the impression of understanding. Yet beneath this fluency lies a fundamental limitation: most models continuously recompute relevance, rather than accumulate meaning. As models scale, this limitation becomes increasingly visible. Long contexts strain compute budgets. Reasoning degrades over

-

Two Paths Toward AGI: Scale vs. Structure

The race toward Artificial General Intelligence is not a single-track competition. It is unfolding along two fundamentally different trajectories.

OpenAI’s Path: Scale, Integration, and Deployment

OpenAI is advancing AGI through:

This approach excels at breadth: general competence, fluency, and robustness across many domains. It leverages enormous resources and engineering discipline to push the limits of